The poor cousin of these is perhaps the idea of System Metaphor. The intention behind XP's System Metaphor practice is to have a common "story that everyone – customers, programmers, and managers – can tell about how the system works." This story is often told in the language of the problem domain, in which case there is no metaphor at all. (In XP terms this is known as a naïve metaphor.)

I suspected that most systems are developed without a system metaphor (or with only a naïve one), so I thought I'd do an in-depth study.

Fewer than 20% of devs use the system metaphor. The evidence is damning!

So do we even care about the system metaphor as a practice? If 80% of developers haven't used a system metaphor, why would you even bother? Are developers missing a trick?

A world without metaphors.

Metaphors are everywhere, and in software land it is impossible to exist without them. Why? Because the mechanics of what is actually happening are so far removed from what the typical user can communicate that we need abstraction to understand anything at all. As a software dev, I don't want the overhead of thinking about how electrons fly around the computer, through copper wires and through the air, and I probably don't need to know much about how data gets from my hard drive into memory, for example. Developers think at one level of abstraction; their clients work at another. The divide is there, and too often stakeholders turn around and complain about the 'jargon' the developers use.

So, to allow the computer scientists to communicate with the devs, they cooked up data structures with familiar names. Think about the humble stack.

The stack datatype.

A tangible stack is a set of objects placed on top of each other. To get to an item you need to remove the items above it. The last item you put on the stack is the first item you need to deal with. Now, the CS boffins who were dreaming up data structures came up with a collection of memory locations that also worked last in, first out. They could have called it anything (particle physicists gave us up, down, strange and charm, anyone?), and I bet even in CS world there were some scientists who just didn't get the stack straight away. So what's the best way of explaining a concept? Describe it in terms the explainee understands.

A stack data type is not vertically aligned; it has no physical dimensions. There are holes in the metaphor. As far as I know, most "real" stacks don't pop either, but the metaphor is good enough to convey how the data structure is supposed to function.
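To see how far the metaphor carries into code, here is a minimal Python sketch of a stack backed by a list. The class name `Stack` and its method names are illustrative choices, not any particular library's API; notice how `push`, `pop` and `peek` borrow the vocabulary of a physical pile even though nothing is stacked vertically in memory.

```python
class Stack:
    """A last-in, first-out (LIFO) collection backed by a Python list."""

    def __init__(self):
        # The end of the list plays the role of the "top" of the pile.
        self._items = []

    def push(self, item):
        """Place an item on top of the stack."""
        self._items.append(item)

    def pop(self):
        """Remove and return the top item; raises IndexError if empty."""
        if not self._items:
            raise IndexError("pop from empty stack")
        return self._items.pop()

    def peek(self):
        """Return the top item without removing it."""
        if not self._items:
            raise IndexError("peek at empty stack")
        return self._items[-1]

    def __len__(self):
        return len(self._items)


plates = Stack()
for plate in ["blue", "green", "red"]:
    plates.push(plate)

print(plates.peek())  # red: the last plate placed is on top
print(plates.pop())   # red
print(plates.pop())   # green
print(len(plates))    # 1
```

The metaphor only has to get the ordering rule across; the list underneath is flat, which is exactly the kind of "hole" described above.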

The stack is just one example and, to be fair, a stack is a very simple concept. But look around the computer/software world and metaphors are just flippin' everywhere.

In design patterns and everyday programming

- repository

- bridge

- adapter

- factory

- decorator

- flyweight

- mediator

- command

- error

- bug

- loop

- concrete class

- file

- queue

- peek

- poke

- pointer

- engine

- layer

- core

- service

- bus

In the OS paradigm

- file system

- folders

- recycle bin

- window

- lock

- desktop

- mailbox

- firewall

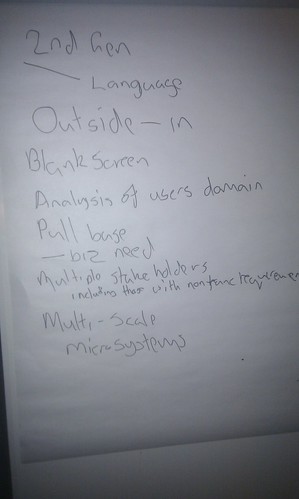

These aren't the sort of systems I build, though. I build systems that help businesses streamline their business processes. My systems have their own complexity. How can metaphors help us construct these systems?

A Common Understanding

Imagine you have two sets of people. The first is a crack programming unit; the second is a group of domain experts. Both are knowledge specialists in their fields. The project cannot fail. But when the two groups talk, it's as if one is from Mars and the other from Venus.

You could get the Martians to speak Venusian, or the Venusians could learn Martian ways. The problem is that it takes a lot to learn Martian well. And boy, do those Venusians lead complicated lives; they are completely alien to the Martians.

There's a third option: if both Martians and Venusians have been observing Earthlings for a while, perhaps they could describe their problems using Earthling ideas and Earthling idioms.

(Earthlings, as we know, are simple creatures with well-defined, funny little habits.)

But metaphors are alien to me?

I suppose it's quite disconcerting not to plump for the naïve metaphor. It seems easy... but is it?

Naïve metaphors are a one-way street. The dev team may know nothing about the domain it is going to work in. The stakeholders hold all the cards.

In the short term it is probably easier for the delivery team to learn about the problem domain.

What can happen, though, is an incomplete transfer of knowledge to the delivery team. I suppose this is unlikely to be too much of a problem in an iterative project. Agile accepts that not everything will be right first time, but that doesn't mean we shouldn't aim to close the iteration loops.

By introducing a metaphor, the knowledge holders have to 'think' about how their domain relates to the metaphor. Processes that are 'second nature' need to be deliberately revisited and described not only in the business stakeholders' terms but also in a shared, discovered paradigm.

I feel this joint learning has some value. It seems strange that the practice is not more popular.